Generative UI in Production: Lessons from a Pydantic-to-DSL Migration

Our agent dashboards used to take 30-40 seconds and cost roughly $0.50 per report. Today they stream in under 10 seconds for around $0.08 — and they're built on the same design system as the rest of the product. This is the story of a migration we expected to take a week and ended up rethinking from first principles.

A year ago, asking a CloudThinker agent for a cost dashboard meant watching a spinner. The agent would call a dashboard() tool, the model would fill in a strict Pydantic schema field by field, and 30-something seconds later — once the entire JSON tree validated — a fully-formed dashboard would snap into place. It looked great. It used the platform's design system. It was also slow enough that most users would tab away and forget what they'd asked for.

Today, the dashboard streams in. KPI cards render first with current-month totals. A line chart fills in as the agent emits it. A service breakdown appears as a bar chart. Everything still matches CloudThinker's design system. Total time: under 10 seconds. Total cost: about $0.08. The chart is interactive because it is the product, not a snapshot of it — click through to the expensive region and you drill in immediately.

CloudThinker agent streaming a cost dashboard artifact in real time — KPI cards, a line chart, and a service breakdown progressively rendering as the agent emits the DSL

- ~75% faster end-to-end
- ~84% cheaper per artifact
- Progressive, line by line
- Enforced by construction

This post is about how we got from the first version to the second — and, more importantly, why the change wasn't a micro-optimization. It was a category shift the whole industry made at the same time, and it took us two architectures to land on the right one.

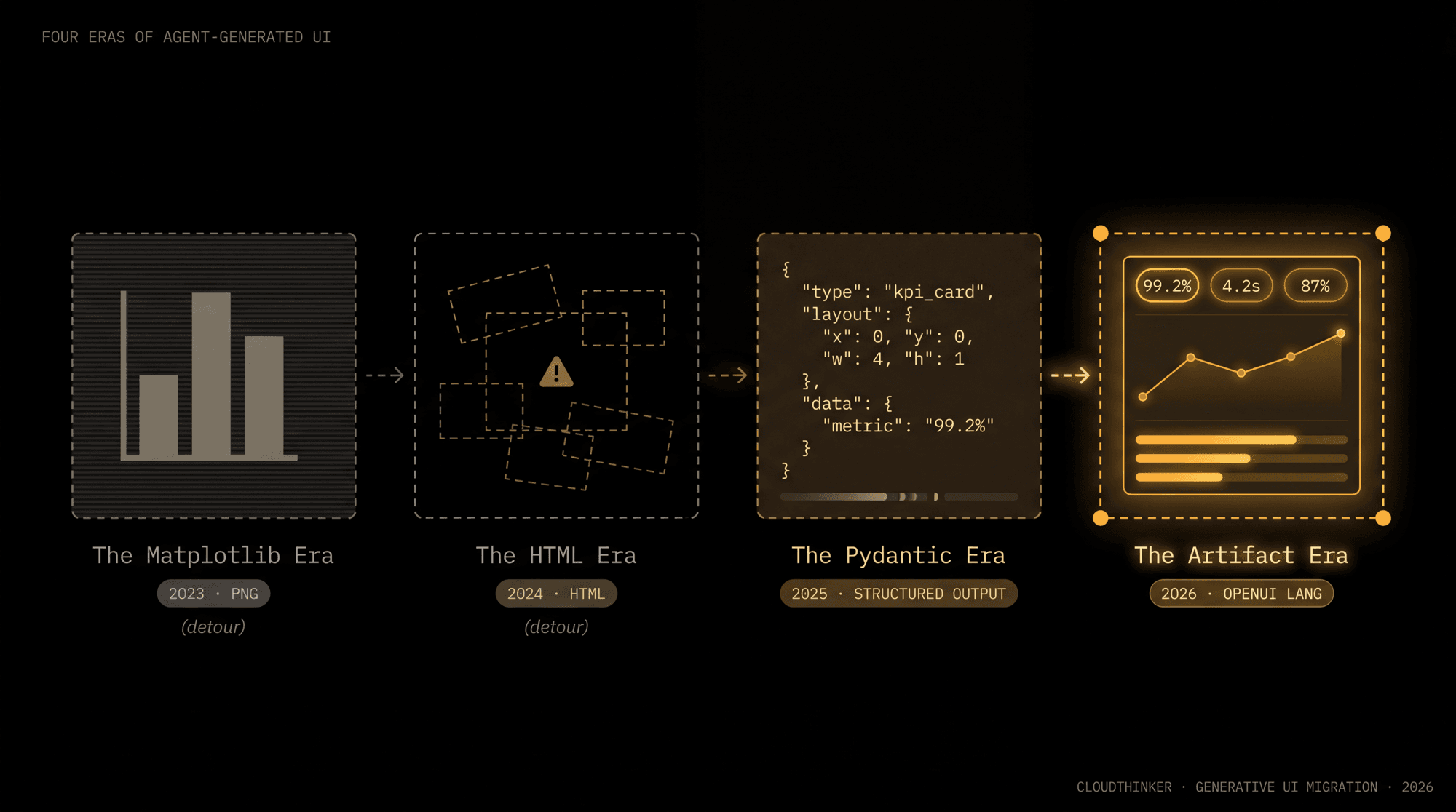

Act 0 — The Two Detours We Skipped

Before talking about what we built, it's worth being honest about what we didn't build. When we first sat down with the "agent generates dashboards" problem, the two obvious paths were already on the whiteboard. We crossed both of them out fast.

Matplotlib PNGs. The most popular pattern in 2023. Let the LLM write Python, run it in a sandbox, capture the PNG. Research from the MatPlotAgent paper (arXiv 2402.11453) is worth quoting: it "struggles to handle complex charts that require fine-grained numerical accuracy and precise visual-textual alignment of chart labels with plot components." A separate study, Are LLMs Ready for Visualization? (arXiv 2403.06158), documented that "numerous attempts to modify the default configuration of scatterplots and bubble charts were unsuccessful." Five runs of the same prompt give five different results. There's a 4-8 second cold-start tax on import matplotlib. PNGs aren't interactive, can't be themed without a fight, and are effectively invisible to screen readers — the ACM DIS 2025 GenUI Study called accessibility "a frequently-mentioned constraint" for generated output. And every chart means executing LLM-generated Python in a sandbox. The best way to not have a sandbox escape is to not execute the code in the first place.


The agent writes Python, a sandbox executes matplotlib, and a PNG embeds in chat. Five specific failure modes compound: design drift, stochastic output, cold-start overhead, zero interactivity, and accessibility gaps.

Raw HTML / JSX freestyle. "Modern frontier models are great at React and Tailwind. Why don't we just let the agent write the UI directly?" Everyone has this idea. Hardik Pandya's essay Expose your design system to LLMs captures why it's a trap: LLMs "fabricate token names, drift on values within a session, lose all context between sessions, and never notice when the upstream library ships breaking changes." Token economics collapse — a single dashboard becomes 2,000-4,000 tokens of markup before a single data point. Half-streamed HTML is broken HTML, so progressive rendering is impossible. Every dashboard becomes a one-off; the design system is a suggestion, not a contract.

And then there's the security bomb.

Our injection vector wasn't hypothetical. Cloud resource names are user-controlled. A customer can tag an EC2 instance with an onerror attribute payload, and the next time our agent generates a dashboard referencing that resource, the tag flows through the LLM's output straight into dangerouslySetInnerHTML.

The agent isn't even "attacked" — it's a pass-through for attacker-controlled strings in the most structurally dangerous way possible. The OWASP guidance is blunt: treat LLM output as untrusted user input. The only durable fix is to make it structurally impossible for the schema to represent a <script> tag in the first place.

OWASP LLM05: Improper Output Handling is officially catalogued in the OWASP Top 10 for LLM Applications. Auth0's writeup puts it bluntly: "Improper Output Handling is the New XSS." The PortSwigger Web Security Academy LLM lab walks through the exact attack: a model is coaxed, directly or via indirect prompt injection, into emitting an onerror payload that ends up in dangerouslySetInnerHTML.
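To make "unrepresentable" concrete, here is a minimal sketch of the difference between interpolating an attacker-controlled resource name into markup and passing it through a closed component. The `Tag` dataclass and `render_tag` function are hypothetical stand-ins written for this illustration, not CloudThinker's renderer; the point is only that the agent supplies a string field while the renderer owns every angle bracket.

```python
import html
from dataclasses import dataclass


# Hypothetical closed component: the only thing the agent can supply is text.
@dataclass(frozen=True)
class Tag:
    text: str


def render_tag(tag: Tag) -> str:
    """Render a Tag; the markup comes from the renderer, never from the agent."""
    return f'<span class="tag">{html.escape(tag.text)}</span>'


# Attacker-controlled resource name, as it might come back from a cloud API.
malicious_name = '<img src=x onerror="alert(1)">'

unsafe_html = f"<td>{malicious_name}</td>"    # what freestyle HTML generation risks
safe_html = render_tag(Tag(malicious_name))   # what a closed vocabulary produces

print(unsafe_html)
print(safe_html)
```

In the second case the payload survives only as inert text, because no field in the component can carry markup.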

We skipped both detours. What we picked instead seemed clever at the time — and it was, until it wasn't.

Act 1 — The Pydantic Era

A year ago, we shipped a dashboard() tool. The agent calls it with strictly typed Pydantic widgets — KPI cards, charts, tables — placed on a 12-column grid. The frontend renders them with the same React components the rest of the product uses. No Python. No HTML. No PNG. Just a typed object emitted through OpenAI's structured-output mode (or Bedrock's equivalent), with the model's decoding constrained against the schema.

The shape, lightly trimmed:

    from typing import Annotated, Literal

    from pydantic import BaseModel, Discriminator

    # KPICard, ChartStructuredOutput, TableStructuredOutput, LayoutConfig,
    # DashboardSection, and TimeContext are existing models, trimmed from this excerpt.

    class KpiCardWidget(KPICard):
        layout: LayoutConfig  # x, y, w, h on a 12-col grid
        type: Literal["kpi_card"]
        section_id: str | None

    class ChartWidget(ChartStructuredOutput):
        layout: LayoutConfig
        type: Literal["chart"]
        # categories, datasets, axes, target_line, threshold_zones...

    class TableWidget(TableStructuredOutput):
        layout: LayoutConfig
        type: Literal["table"]
        # columns, rows, summary_row...

    # The "type" field selects which widget model validates each entry.
    DashboardWidget = Annotated[
        KpiCardWidget | TableWidget | ChartWidget,
        Discriminator("type"),
    ]

    class Dashboard(BaseModel):
        title: str
        widgets: list[DashboardWidget]
        sections: list[DashboardSection] | None
        time_context: TimeContext | None

The agent emits a Dashboard object via tool call. Discriminated unions guarantee every widget has a known shape. Validators enforce layout rules — KPI cards must be in row 0, chart width must be at least 3 columns, widgets sharing a row must belong to the same section — and feed structured error messages back to the model when something's off. The renderer is a single <DashboardTab> component that walks the tree and maps each typed widget to a real platform component (Highcharts inside Card containers, native tables, themed KPI cards).
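The layout rules in that paragraph are easy to picture as a plain function. This is a minimal sketch using only the standard library, not our Pydantic validators: the `Widget` dataclass and `layout_errors` name are made up for the example, and the rules are the ones quoted in this post (KPI cards in row 0, KPI width of 3, 4, or 6, charts at least 3 columns wide, one section per row).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Widget:
    type: str                          # "kpi_card", "chart", or "table"
    x: int
    y: int
    w: int
    section_id: Optional[str] = None


def layout_errors(widgets: list[Widget]) -> list[str]:
    """Return readable rule violations; an empty list means the layout is valid."""
    errors = []
    section_by_row = {}
    for index, widget in enumerate(widgets):
        where = f"widgets[{index}]"
        if widget.type == "kpi_card":
            if widget.y != 0:
                errors.append(f"{where}: KPI cards must be in row 0 (got y={widget.y})")
            if widget.w not in (3, 4, 6):
                errors.append(f"{where}: KPI card width must be 3, 4, or 6 (got w={widget.w})")
        if widget.type == "chart" and widget.w < 3:
            errors.append(f"{where}: chart width must be at least 3 columns (got w={widget.w})")
        # The first widget seen in a row decides which section that row belongs to.
        first_section = section_by_row.setdefault(widget.y, widget.section_id)
        if first_section != widget.section_id:
            errors.append(f"{where}: widgets sharing row {widget.y} must belong to the same section")
    return errors


draft = [
    Widget("kpi_card", x=0, y=0, w=4, section_id="summary"),
    Widget("kpi_card", x=4, y=1, w=5, section_id="summary"),
    Widget("chart", x=0, y=1, w=2, section_id="trend"),
]
for message in layout_errors(draft):
    print(message)
```

Each message names the offending widget and the rule it broke, which is the shape of feedback the model can act on.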

This was a smart starting point. We knew matplotlib and HTML freestyle were dead ends, and constrained decoding gave us a real escape hatch. Specifically:

- Design consistency for free. Every chart is a Highcharts <LineChart> from the design system. There is no "agent invented a new shade of primary blue" because the agent is selecting components, not styling them.

- Zero XSS surface. The agent physically cannot emit <script>. The schema has no field that lets it.

- No code execution. No sandbox, no import matplotlib, no Python interpreter on the hot path.

- Validation is structural. Bad widget = clean error message back to the agent ("KPI card width must be 3, 4, or 6"). The agent fixes itself most of the time without a retry loop.

- Auditable. Dashboards persist as typed JSON in PostgreSQL. Every report a customer ever saw is reconstructable.

For about ten months, this was our answer. It shipped, customers used it, and it didn't break. But by month six the cracks were obvious to anyone watching the latency dashboard.

Token economics. A four-widget dashboard was 1,500-3,000 tokens of JSON before a single data point. Discriminated unions are verbose by nature: every widget repeats type, layout: {x, y, w, h}, section_id, and the full nested chart config. Multiply by a long-running investigation that emits multiple reports, multiply by users, multiply by frontier-model output pricing. The line item showed up.

No real streaming. This is the one that hurt the most. Constrained decoding still generates token by token, but a schema-validated tool call is only usable once the entire JSON tree parses cleanly, so nothing reaches the renderer before the last token. Structured-output mode (Pydantic schemas, JSON Schema, function-call parameters: they're all the same shape) fundamentally trades streamability for validity. The user sees a spinner for 30+ seconds, then the whole dashboard appears at once. Wall-clock latency was OK by 2025 standards. Perceived latency was the worst part of the product.
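The root of the spinner is easy to reproduce with the standard library: a prefix of a JSON document is not itself a JSON document. The sketch below uses a made-up two-field payload, not our real schema, and checks every strict prefix of it.

```python
import json

# Hypothetical, heavily simplified dashboard payload.
full = json.dumps(
    {"title": "Q4 costs", "widgets": [{"type": "kpi_card", "value": "$12,450"}]}
)


def parses(text: str) -> bool:
    """True if `text` is a complete, valid JSON document."""
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False


# Try every strict prefix, i.e. every point at which a stream could be cut off.
prefix_results = [parses(full[:cut]) for cut in range(1, len(full))]

print(any(prefix_results), parses(full))  # prints: False True
```

Tolerant partial-JSON parsers exist, but they have to guess at the missing structure, which gives up the validity guarantee that motivated the schema in the first place.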

Schema rigidity. Want a new chart type? Add a discriminated variant. Update the validator. Regenerate frontend types via pnpm gen:local. Ship a backend release. Ship a frontend release. A new component took a sprint. A new layout pattern took half a sprint. Every time the design team wanted to try something, the answer was "yes, in the next release."

One artifact per conversation. The tool was modeled around create_dashboard / add_widgets / update_widgets. A single artifact lifecycle. Agents that wanted to emit multiple small reports in a single thread had to overwrite the previous one or skip.

Pixel arithmetic instead of intent. A Stack(KPI, KPI, KPI, Chart) layout had to be expressed as four absolute coordinates ({x: 0, y: 0, w: 4}, {x: 4, y: 0, w: 4}, {x: 8, y: 0, w: 4}, {x: 0, y: 1, w: 12, h: 3}). The agent burned tokens reasoning about grid math instead of describing what it wanted to show. We had a half-page of layout examples in the tool description that the agent had to re-read on every call.
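For a sense of the arithmetic the agent was doing in its head, here is a small sketch that derives those four coordinates from the intent "three KPI cards above a full-width chart". The helper names are hypothetical; in the Pydantic era nothing like this existed on the agent's side, which is exactly why the model had to reason through the numbers itself.

```python
GRID_COLUMNS = 12


def row_of_equal_cards(count: int, y: int = 0) -> list[dict]:
    """Split one grid row evenly between `count` cards."""
    if count <= 0 or GRID_COLUMNS % count != 0:
        raise ValueError(f"{count} cards do not divide a {GRID_COLUMNS}-column row evenly")
    width = GRID_COLUMNS // count
    return [{"x": index * width, "y": y, "w": width} for index in range(count)]


def full_width(y: int, h: int) -> dict:
    """One widget spanning the whole row."""
    return {"x": 0, "y": y, "w": GRID_COLUMNS, "h": h}


layout = row_of_equal_cards(3) + [full_width(y=1, h=3)]
for box in layout:
    print(box)
```

This is deterministic work with a single right answer, which makes it a poor use of output tokens.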

End-of-Act-1 scoreboard: 30-40 seconds wall-clock, roughly $0.50 per report. Functionally correct, design-system-consistent, secure — and unit economics that didn't pencil out for what we wanted to build next.

Act 2 — The DSL We'd Been Dreaming About

We'd been talking about "what if the agent emitted a tiny language instead of a typed JSON tree" for months. We even sketched a grammar on a whiteboard once. Then we kept shipping other things, and the dashboard kept being good enough.

What changed in early 2026: the rest of the industry caught up to where we'd been doodling. Generative UI became its own category, with multiple serious implementations converging on the same basic idea — and several of them shipped to production while we were heads-down on other features.

- Vercel AI SDK 3.0 introduced streamUI in early 2024: stream React Server Components from LLM tool calls. Worth weighing: per Vercel's own discussion thread, "Development of AI SDK RSC is paused. We recommend using AI SDK UI." Even Vercel couldn't make "stream React components from the server" work well enough to recommend for production.

- LangChain / LangGraph Generative UI maps tool calls to pre-registered React components with type-safe streaming. The assistant-ui library provides an out-of-the-box renderer with progressive updates during long-running graph execution.

- Thesys C1 is an OpenAI-compatible endpoint that outputs UI components instead of text. Ask it "show me monthly revenue trends" and get a rendered line chart instead of a paragraph. See also their guide to building Generative UI applications.

- CopilotKit AG-UI is a bidirectional agent↔UI event protocol, adopted by Google, LangChain, AWS, Microsoft, Mastra, and PydanticAI, with over 120K weekly installs as of early 2026.

- Google A2UI is Google's declarative spec for agent-generated UIs. Google Research has also published their own generative UI work.

- OpenUI Lang takes the most opinionated line: a compact, line-oriented DSL designed for LLMs to stream UI structure directly. Their published benchmarks report 60–67% fewer tokens than JSON-based alternatives and up to 3× faster render on deeply nested UIs.

CopilotKit's framing is useful. They describe three patterns of Generative UI: Controlled (high structure, low freedom), Declarative (shared control), and Open-ended (agents produce arbitrary UI). Our Pydantic Era was firmly Controlled — we just picked a payload format that didn't stream. The fix was to keep the Controlled posture and swap the payload.

The pattern underneath all of them

Peel back the branding and these projects agree on more than they disagree on. Every production-leaning approach has landed on the same four properties:

- A closed component vocabulary. The agent can only emit things the renderer already knows how to draw. No arbitrary markup.

- A typed, structured payload. JSX, JSON, YAML, or a custom DSL — but always something a parser can validate before it reaches the DOM.

- Streaming-friendly grammar. Partial output has to be meaningful. Half an HTML tag is broken; half a tool call or half a DSL block should still render something.

- Renderer-owned styling. The agent describes intent (status: "success"); the renderer decides which shade of green.

The shape of the payload varies — tool calls (Vercel/LangChain), JSON schemas (Thesys), event protocols (AG-UI/A2UI), or line-oriented DSLs (OpenUI). But the contract is identical: agents describe, renderers render. The whole category moved this direction because the alternative — letting the agent write the view layer — was strictly worse on every axis that mattered.

OpenUI Lang vs. JSON-based UI Formats

OpenUI Lang's published benchmark shows 67.1% fewer tokens than Vercel JSON-Render, 65.4% fewer than Thesys C1 JSON, and 61.4% fewer than YAML across seven real-world UI scenarios. For deeply nested dashboards the gap grows to 3.0× — the main reason we picked it for CloudThinker's Artifact system.

The token economics drive a lot of the architectural choices. JSON is verbose. YAML is slightly less so. Line-oriented DSLs are the most compact of the common options, and that gap compounds: shorter payloads mean smaller system prompts, fewer output tokens, faster streaming, and more predictable costs. A dashboard that a JSON-based renderer takes ~14 seconds to finish can stream in ~5 seconds with a DSL grammar designed for progressive parsing.

Why we didn't roll our own

Designing a DSL is the easy part. Building the parser, the validator, the streaming render path, the error messages, the few-shot examples, the documentation: that's months. Every serious GenUI project has converged on the same four properties independently. Picking one off the shelf was strictly better than reinventing the wheel.

We evaluated against three things that mattered to us, in order:

- Token economics. We already knew the Pydantic JSON was too verbose. Whatever we picked had to be measurably smaller.

- Streaming-first grammar. Partial parse must render something — that was the lesson from the Pydantic era's spinner problem. If the format requires a closing brace before it's valid, it disqualifies itself.

- Maps cleanly to a closed component vocabulary we already own. We didn't want to throw away the design-system components from the Pydantic era. Whatever we picked had to be a payload format we could point at our existing renderers.

OpenUI Lang scored highest on all three. The 60-67% token reduction over JSON-based payloads was the visible win, but the hidden win was the line-oriented grammar — each kpi = KPICard(...) is an independently parseable statement. Half a dashboard renders as half a dashboard, not a spinner. We adapted the OpenUI vocabulary to our FinOps-flavored component palette and built our Artifact system on top.
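"Independently parseable" is the property doing the work, so here is a minimal sketch of what a streaming front end for such a grammar could look like. It is not OpenUI's parser or ours: `StatementStream` is a hypothetical helper that buffers chunks as they arrive and releases a statement as soon as its brackets balance and a newline closes it.

```python
class StatementStream:
    """Buffers streamed text and returns each statement once it is complete."""

    def __init__(self) -> None:
        self._buffer = ""

    def feed(self, chunk: str) -> list[str]:
        self._buffer += chunk
        complete = []
        depth = 0          # nesting depth of ( and [
        quote = None       # the quote character we are inside, if any
        escaped = False
        start = 0
        # The buffer always begins at a statement boundary, so rescanning is safe.
        for index, char in enumerate(self._buffer):
            if quote is not None:
                if escaped:
                    escaped = False
                elif char == "\\":
                    escaped = True
                elif char == quote:
                    quote = None
            elif char in "\"'":
                quote = char
            elif char in "([":
                depth += 1
            elif char in ")]":
                depth -= 1
            elif char == "\n" and depth == 0:
                statement = self._buffer[start:index].strip()
                if statement:
                    complete.append(statement)
                start = index + 1
        self._buffer = self._buffer[start:]   # keep only the unfinished tail
        return complete


stream = StatementStream()
chunks = [
    'kpi = KPICard("Total Sp',
    'end", "$12,450")\ntrend = LineChart(\n  ["Jan", "Feb"],',
    '\n  [Series("Cost", [1, 2])]\n)\n',
]
for chunk in chunks:
    for statement in stream.feed(chunk):
        print("render:", statement.split(" = ")[0])
```

The KPI card is released while the chart is still arriving, which is the behaviour a closing-brace format cannot offer.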

Act 3 — How CloudThinker's Artifact System Works Now

The Artifact system is our second architecture, not an answer that fell from the sky. The agent calls an artifact() tool. The tool accepts structured parameters: type, title, description, and a body containing a compact, line-oriented DSL block (inspired by OpenUI Lang, adapted to a FinOps-flavored component palette). The body is validated against a component whitelist, stored in PostgreSQL, and streamed to the frontend.

Agent → OpenUI Lang → Many Surfaces

The agent emits typed DSL inside an artifact() tool call. The parser enforces a component whitelist. The body is stored as plain text in PostgreSQL and rendered to multiple surfaces — React for web, WeasyPrint for PDF — from the same source.

A concrete example. This is the body an agent emits for a quarterly cost dashboard. Note how each statement is a complete component assignment, parseable and renderable on arrival:

    kpi_spend = KPICard("Total Spend", "$12,450", "-8%", "success")
    kpi_save = KPICard("Savings", "$2,400", "+12%", "success")

    trend = LineChart(
        ["Jan", "Feb", "Mar"],
        [Series("Cost", [13500, 12450, 11800])]
    )

    breakdown = BarChart(
        ["EC2", "RDS", "S3"],
        [Series("Cost", [5200, 3100, 1800])]
    )

    kpi_row = Stack([kpi_spend, kpi_save], "row")

    root = Stack([
        kpi_row,
        Card([CardHeader("Cost Trend", "Monthly spend trajectory"), trend]),
        Card([CardHeader("By Service", "Current month breakdown"), breakdown])
    ])

That's the entire payload. Compare it to the equivalent Pydantic Era JSON — same dashboard, but with type discriminators, layout: {x, y, w, h} per widget, full chart-axis configs, and section_id references. Same information, several times the tokens.
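A rough way to see the gap for a single widget, with two caveats stated up front: the JSON field names below are hypothetical (the real Pydantic models are trimmed in this post), and character counts are only a crude proxy for tokens. The sketch prints whatever the two lengths turn out to be rather than asserting a ratio.

```python
import json

# One KPI card in the line-oriented DSL, copied from the example body.
dsl_line = 'kpi_spend = KPICard("Total Spend", "$12,450", "-8%", "success")'

# The same card as a Pydantic-era widget. Field names are illustrative guesses.
json_widget = json.dumps(
    {
        "type": "kpi_card",
        "layout": {"x": 0, "y": 0, "w": 4, "h": 1},
        "section_id": None,
        "title": "Total Spend",
        "value": "$12,450",
        "change": "-8%",
        "status": "success",
    }
)

print("DSL characters: ", len(dsl_line))
print("JSON characters:", len(json_widget))
print("JSON / DSL:     ", round(len(json_widget) / len(dsl_line), 2))
```

For a real measurement you would run both payloads through the tokenizer of the model you actually deploy.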

The component palette is deliberately small. Agents can emit:

- Layout: Stack, Card, CardHeader, Divider

- Data: KPICard, Table, TextContent, Callout, Tag

- Charts: BarChart, LineChart, AreaChart, PieChart, RadarChart, ScatterChart, SingleStackedBarChart

- Advanced: GaugeChart, Treemap, Heatmap, FeatureMatrix, ProsCons, ScoreTable

That's the entire vocabulary. An agent physically cannot emit a <script> tag, a rogue <div>, a fabricated color token, or a chart library we haven't vetted. The OWASP LLM05 attack surface is gone by construction, just like in the Pydantic era — but now with progressive streaming and ~70% fewer tokens.
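A whitelist check of this kind fits in a few lines. The sketch below leans on a coincidence: the example body happens to be syntactically valid Python, so the standard `ast` module can stand in for a real DSL parser. That is an assumption made for illustration only, since our actual parser is purpose-built, and `ALLOWED_COMPONENTS` is just a subset of the palette plus the `Series` helper from the example.

```python
import ast

# Illustrative subset of the palette, plus the Series helper used in the example.
ALLOWED_COMPONENTS = {
    "Stack", "Card", "CardHeader", "KPICard", "LineChart", "BarChart", "Series",
}
# Syntax permitted inside a statement: nested calls, names, literals, lists.
ALLOWED_NODES = (ast.Call, ast.Name, ast.Constant, ast.List, ast.Load)


def check_statement(source: str) -> list[str]:
    """Return whitelist violations for one `name = Component(...)` statement.

    Raises SyntaxError if `source` is not parseable at all.
    """
    tree = ast.parse(source)  # parses only; nothing is ever executed
    statement = tree.body[0] if len(tree.body) == 1 else None
    if not (
        isinstance(statement, ast.Assign)
        and len(statement.targets) == 1
        and isinstance(statement.targets[0], ast.Name)
        and isinstance(statement.value, ast.Call)
    ):
        return ["expected a single `name = Component(...)` assignment"]

    problems = []
    for node in ast.walk(statement.value):
        if not isinstance(node, ALLOWED_NODES):
            problems.append(f"unsupported syntax: {type(node).__name__}")
        elif isinstance(node, ast.Call):
            if not isinstance(node.func, ast.Name):
                problems.append("component must be referenced by bare name")
            elif node.func.id not in ALLOWED_COMPONENTS:
                problems.append(f"unknown component: {node.func.id}")
    return problems


print(check_statement('kpi = KPICard("Total Spend", "$12,450", "-8%", "success")'))
print(check_statement('x = Script("alert(1)")'))
print(check_statement('x = os.system("rm -rf /")'))
```

Anything outside the vocabulary is rejected before it is stored or rendered, and because the check only inspects a syntax tree there is no code path that evaluates what the agent wrote.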

The frontend renders the DSL with a registered component library (Highcharts for charts, native components for everything else). The same DSL also feeds a WeasyPrint-backed HTML renderer that produces PDFs — same body, same output, different surface. Write once, render everywhere.

| Dimension | Matplotlib (detour) | Raw HTML (detour) | Pydantic Era | Artifact Era |
|---|---|---|---|---|
| Output format | Binary PNG | HTML markup | Typed JSON tree | Line-oriented DSL |
| Streaming | No (atomic) | Half-HTML is broken | Wait for valid JSON | Yes, line by line |
| Interactivity | None | Arbitrary (risky) | Componentized | Componentized |
| Design consistency | matplotlib defaults | Drifts every session | Design-system locked | Design-system locked |
| Security (XSS) | Sandbox exec risk | OWASP LLM05 exposure | Unrepresentable | Unrepresentable |
| Output tokens | ~Python code | 2k–4k / report | 1.5k–3k / report | 60–70% less than JSON |
| Schema evolution | Code-only | Free-for-all | Sprint per new widget | Add to component palette |
| PDF export | Native (already image) | Fragile (headless browser) | Custom renderer | Same DSL, static renderer |
| Debuggability | Opaque binary | Opaque DOM | Verbose JSON diff | Plain-text, diffable |

Five Lessons from Production

Lesson 1: Constraints Are the Product, Not the Limitation

The Pydantic era taught us this lesson once. The Artifact era taught it to us a second time. Both architectures work for the same underlying reason: the agent doesn't get to invent the visual layer. It picks from a vocabulary we control. The two architectures differ on format — typed JSON versus line-oriented DSL — but they agree on the contract: the agent describes intent, the renderer owns the pixels.

When we first proposed a whitelist of components, the obvious pushback was: "Won't that limit what the agent can do?" Yes. That's the entire point. Every dashboard the agent produces inherits CloudThinker's design system for free — because it's literally rendered by the same components the rest of the product uses. There is no drift because there is no vocabulary to drift within.

The ACM DIS 2025 GenUI Study found that 37 UX professionals, after a week with state-of-the-art GenUI tools, agreed "GenUI quality was not production-ready and would require further tweaking and polishing" — with accessibility and domain-specificity as the main complaints. Both are artifacts of Open-ended generation. In a Controlled pattern with a whitelist, accessibility is a property of the component (we audit LineChart once), and domain-specificity is the entire reason the vocabulary exists.

If the agent wants a new kind of component, we add it once. Not per prompt. Not per session. Once.

Lesson 2: Streaming Changes UX More Than You Think

You can't stream a PNG: it's atomic. You can't safely stream raw HTML: half-rendered markup is broken markup. You can stream a line-oriented DSL, because every statement is a complete, parseable component. A dashboard that takes 14.2 seconds to finish as JSON streams in 4.9 seconds as OpenUI Lang, not because the model is faster, but because the grammar was designed for progressive rendering from day one.

This is the lesson the Pydantic era couldn't teach us. Constrained-decoding JSON is correct, but it's a wall: nothing is renderable until the tree validates, and the user stares at a spinner. A line-oriented grammar streams differently. As each kpi = KPICard(...) statement arrives, the renderer can immediately show that card. The user sees a skeleton, then KPIs, then charts, then tables, progressively, in the order the agent generated them. Perceived latency drops more than actual latency does, because the user has something to look at from the second second onward.

JSON-based payloads fail the same test as PNGs and raw HTML: the tree isn't valid until the closing brace, so the renderer has nothing it can trust until generation finishes. A grammar where each statement is independently parseable passes it. Streaming isn't a feature you bolt on to the output format. It's a constraint the output format has to satisfy from day one, and it's why every serious GenUI project has ended up rethinking the payload shape.

Lesson 3: Token Economics Compound at Agent Scale

Per-request savings don't matter at low volume. They matter enormously at agent volume. When the same agent generates many artifacts in a single long-running investigation — dashboards, tables, sub-reports, one-offs — the per-artifact token delta turns into a per-user cost delta, and the per-user delta turns into a unit-economics story.

The Pydantic era taught us that a strict schema is necessary but not sufficient. JSON costs you tokens you didn't budget for: every widget repeats its discriminator, every layout repeats its grid math, every chart repeats its axis config. Published benchmarks across the GenUI category show DSL-based payloads running 60-67% fewer tokens than JSON-based alternatives for the body alone. But the bigger win isn't the payload tokens — it's the instruction tokens. Teaching the agent a small vocabulary is much shorter than teaching it "how to fill in our dashboard schema correctly." The system prompt shrinks. The few-shot examples shrink. The agent has less to think about, so it thinks faster.

The hook numbers at the top of this post — 30-40 seconds down to around 10 seconds, ~$0.50 per report down to ~$0.08 — are the compounded effect of all of this. Smaller output, shorter system prompt, faster rendering, no waiting on constrained decoding to finish a JSON tree.
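The headline percentages follow directly from those figures. A quick check, treating "under 10 seconds" as exactly 10, so the latency numbers are a floor rather than a measurement:

```python
def reduction(before: float, after: float) -> float:
    """Fractional reduction going from `before` to `after`."""
    return (before - after) / before


cost = reduction(0.50, 0.08)        # dollars per report
latency_low = reduction(30, 10)     # seconds, from the fast end of the old range
latency_high = reduction(40, 10)    # seconds, from the slow end of the old range

print(f"cost: {cost:.0%}, latency: {latency_low:.0%} to {latency_high:.0%}")
# prints: cost: 84%, latency: 67% to 75%
```

So "~84% cheaper" is exact for the rounded prices, and "~75% faster" corresponds to the slow end of the old 30-40 second range.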

Lesson 4: One DSL, Many Surfaces

Same artifact body, multiple renderers:

- Web: React components (Highcharts inside Card containers)

- PDF: WeasyPrint-friendly HTML, for digest reports and audit exports

- Future Slack / email: a straightforward mapping to Slack Block Kit or MJML templates

You cannot do this with matplotlib (one output: PNG). You cannot do this with hand-written React (tightly coupled to a browser runtime). The Pydantic era was halfway there — the JSON tree was renderer-agnostic in principle, but in practice the React renderer and the PDF renderer drifted because the schema was too rich to fully cover twice. The DSL is tight enough that both renderers stay honest.
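The "one body, many renderers" idea can be sketched as a registry of functions that all accept the same parsed component. Everything here is hypothetical: the `KPICard` field names are guesses at what the four positional arguments in the example mean, and the two toy renderers stand in for the real React and WeasyPrint paths.

```python
import html
from dataclasses import dataclass


@dataclass(frozen=True)
class KPICard:
    title: str
    value: str
    change: str
    status: str   # intent only, e.g. "success"; each renderer picks the styling


def render_html(card: KPICard) -> str:
    """Static HTML, the kind of output a print or PDF pipeline could consume."""
    return (
        f'<div class="kpi kpi--{html.escape(card.status)}">'
        f"<h3>{html.escape(card.title)}</h3>"
        f"<p>{html.escape(card.value)} ({html.escape(card.change)})</p></div>"
    )


def render_text(card: KPICard) -> str:
    """Plain text, the kind of fallback an email or chat surface might use."""
    return f"{card.title}: {card.value} ({card.change})"


# One registry entry per surface; adding a surface never touches the DSL.
SURFACES = {"html": render_html, "text": render_text}

card = KPICard("Total Spend", "$12,450", "-8%", "success")
for name, render in SURFACES.items():
    print(f"{name}: {render(card)}")
```

The smaller the component's field list, the easier it is to keep every renderer in that registry complete, which is the drift problem described above.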

This also sets up how we want to handle editing. A user says "make the chart bigger." Instead of re-generating from scratch, the agent can produce a diff against the existing DSL, and the renderer re-paints. The DSL is a first-class document, not a one-shot output.

Lesson 5: Debuggability Is a Superpower

Artifacts are stored as plain-text DSL in PostgreSQL. This has three properties we didn't plan for but can't live without:

- Diffs. "Why did the agent pick a bar chart here instead of a line chart last week?" is a SELECT query followed by a text diff. The Pydantic era's JSON dumps were technically diffable too, but the noise-to-signal ratio was bad: every diff was 80% positional reshuffling.

- Replay. Loading a past artifact and re-rendering it costs nothing. No code execution, no sandbox, no model call. Useful for bug reports and regression tests.

- Auditability. Every report is a human-readable, version-controllable document. For customers in regulated industries, this matters a lot.
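The "text diff" half of that workflow needs nothing beyond the standard library. In this sketch the two bodies are inline strings standing in for rows fetched from the database, and the labels passed to `unified_diff` are made up.

```python
import difflib

# Stand-ins for two stored artifact bodies; in practice these come from a query.
last_week = 'breakdown = LineChart(["EC2", "RDS", "S3"], [Series("Cost", [5200, 3100, 1800])])\n'
this_week = 'breakdown = BarChart(["EC2", "RDS", "S3"], [Series("Cost", [5200, 3100, 1800])])\n'

diff = list(
    difflib.unified_diff(
        last_week.splitlines(keepends=True),
        this_week.splitlines(keepends=True),
        fromfile="artifact@last_week",
        tofile="artifact@this_week",
    )
)
print("".join(diff), end="")
```

Because each component is one statement, a changed chart type shows up as one removed line and one added line, with no positional noise around it.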

Contrast: if your agent output is a PNG, good luck explaining to a compliance auditor why the chart looks the way it does.

What We'd Do Differently

The honest retrospective:

The first migration is the one you should expect. The most expensive lesson from the Pydantic era was assuming we'd get the format right on the first try. We knew matplotlib and HTML were dead ends and we picked the next-most-obvious thing — strict typed JSON via constrained decoding. It worked. It was also a stepping stone, not a destination. If you're building a Generative UI system today, budget for at least one rewrite of the payload format. Preferably plan for it from the start.

Ship the DSL spec first, then the components. We built components opportunistically the second time around — BarChart when we needed a bar chart, GaugeChart when we needed a gauge. In hindsight we should have started by writing down the entire intended vocabulary as a locked spec, validated it against a handful of target reports, and then built components. The irony: we did exactly this with Pydantic schemas the first time. Doing it twice would have been smarter than doing it once perfectly.

Prompt engineering is most of the work. The DSL is trivial — a few dozen components, a few hundred lines of parser. What took the longest was teaching the agent when to use a KPICard versus a Callout, when a GaugeChart is better than a single-value metric, when to group cards into Stack("row") versus a vertical layout. The Thesys guidance on building Generative UI applications turned out to be more useful than we expected — the design patterns matter more than the framework.

Validation errors need to be LLM-friendly. Our first parser returned Python tracebacks to the agent when the DSL was malformed. The agent got confused by the tracebacks and hallucinated more errors trying to fix them. Now the parser returns structured hints — "You called LineChart without a labels array. Expected: LineChart(labels, series). Did you mean: LineChart(['Jan', 'Feb'], [Series('Cost', [100, 200])])?" Error messages are prompts. Treat them as such. (The Pydantic era got this right by accident — Pydantic's structured error messages are already pretty good.)
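Here is a minimal sketch of that kind of hint generator. The `LineChart(labels, series)` signature and the suggested call come from the message quoted above; the `SIGNATURES` and `EXAMPLES` tables and the function name are hypothetical scaffolding, not our parser's internals.

```python
from typing import Optional

# Per-component parameter names and one known-good call to suggest.
SIGNATURES = {"LineChart": ("labels", "series")}
EXAMPLES = {"LineChart": "LineChart(['Jan', 'Feb'], [Series('Cost', [100, 200])])"}


def missing_args_hint(component: str, arg_count: int) -> Optional[str]:
    """Return a prompt-style hint for a call with too few arguments, else None."""
    params = SIGNATURES.get(component)
    if params is None:
        known = ", ".join(sorted(SIGNATURES))
        return f"Unknown component {component}. Known components: {known}."
    if arg_count >= len(params):
        return None
    missing = ", ".join(params[arg_count:])
    return (
        f"You called {component} without: {missing}. "
        f"Expected: {component}({', '.join(params)}). "
        f"Did you mean: {EXAMPLES[component]}?"
    )


print(missing_args_hint("LineChart", 1))
print(missing_args_hint("Sparkline", 2))
```

The hint states what was wrong, what was expected, and a concrete correct call, and it leaves out stack frames and internal identifiers that the model might try to "fix".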

Don't skip the PDF renderer. Building the WeasyPrint HTML renderer in parallel with the React one forced us to keep the DSL truly declarative. Any component that secretly needed client-side JavaScript to render was a leak — and having a second renderer exposed every leak immediately. If you're building a Generative UI system and you only target the browser, you'll accrue hidden coupling you won't notice until the first time you need to export.

What's Next — From Snapshots to Live Workspaces

Today's artifacts are honest read-only documents. The agent emits the DSL, the renderer paints it, and that's where the conversation ends. You can look at the dashboard, but you can't do anything to it — every follow-up question kicks off a fresh generation pass and a new artifact appears next to the old one. It works, but it treats the artifact like a printout rather than a workspace.

The next iteration we're building flips that. Artifacts become stateful surfaces the user and the agent share, not snapshots the agent throws over the wall. Three concrete shifts:

Bidirectional artifacts. Click a region on the cost map and the agent drills in. Drag a date range on a line chart and the agent re-runs the underlying query against the new window. Hover a row in a service breakdown and the agent surfaces the related anomalies. The artifact stops being a destination and starts being an input — every interaction is an implicit prompt that the agent picks up on the next turn.

Editable artifacts via DSL diffs. "Make the time range 90 days" should not require a full regeneration. The agent emits a small DSL diff against the existing artifact, the renderer re-paints in place, and the audit log records the delta. Artifacts become version-controlled documents — you can branch them, replay them, and trace exactly which agent changed which panel and why.

Embedded controls as first-class DSL primitives. New components such as Filter(), DateRange(), Toggle(), and Slider() that the agent can drop into an artifact and the renderer wires up to a re-run. The DSL graduates from a layout language to an interaction language, but the contract stays the same: the agent describes intent, the renderer owns the pixels and the events. Each renderer degrades a control gracefully for its own surface: a DateRange() becomes a static caption in print, a button in Slack, a live picker in the browser. One body, many surfaces, all of them alive.

The deeper bet: Generative UI is going to look obvious in 18 months, the same way streaming chat responses look obvious now. The surface area of "what the agent produces" is going to keep getting smaller and more semantic, and the surface area of "what the product renders" is going to keep getting richer. The line between them is the contract that matters — and as Roger Wong put it, we're heading toward interfaces that exist for a single moment, composed on demand, thrown away after.

Try It

CloudThinker's agent-generated dashboards are live at app.cloudthinker.io. Ask any agent "show me my cloud spend by service" and watch the artifact stream in. The same DSL powers the dashboards you see in chat, the PDF digest reports that land in your inbox, and the audit exports. One body, many surfaces.

A quick shout-out to the teams pushing Generative UI forward: Vercel for shipping the first widely-used streaming UI primitive, LangChain for the graph-native approach, Thesys for the category-defining work on C1, CopilotKit for the AG-UI protocol, Google for A2UI, and OpenUI for pushing DSL-based streaming further than anyone else. The ecosystem is better because of all of them — and the pattern is only going to get more prevalent from here.

Further reading: OpenUI Lang Benchmarks · CopilotKit: The Developer's Guide to Generative UI in 2026 · OWASP LLM05: Improper Output Handling · ACM DIS 2025: The GenUI Study · Generative UI and the Ephemeral Interface